Zonefs file system for zoned storage devices will land with the release of Linux 5.6. Here’s what you need to know.

In this blog:

- Another Linux Filesystem?

- Zoned Block Devices

- About Zonefs

- Links to documentation files

For the past few years, we’ve been extremely busy contributing to the Linux kernel, from supporting the RISC-V architecture to storage stack enhancements and SMR support, and now a new Linux file system: Zonefs!

This last achievement is a great milestone, not just because landing a new file system in the Linux kernel is difficult, but also because it’s opening up new possibilities for developers who want to take advantage of zoned block devices.

Wait, Another Linux Filesystem?

So first, let’s set the stage right. Zonefs is not a general-purpose file-system like the famous XFS or ext4. Legacy, unmodified applications will not be able to take advantage of zonefs.

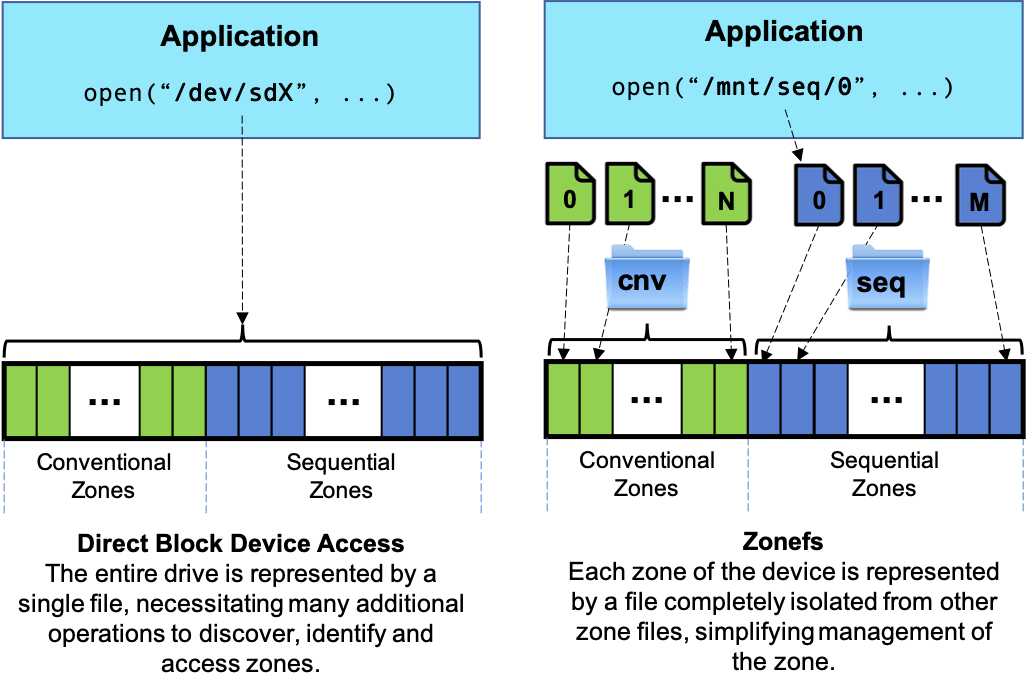

Rather, Zonefs is a highly specialized file system for zoned storage devices (SMR HDDs and ZNS SSDs). It simplifies the implementation of zoned block device support in applications by replacing raw block device file accesses with the richer set of regular file system calls. This means, for instance, that developers do not have to rely on direct block device file ioctls, which can be far more obscure.

Zonefs exposes each zone of a zoned block device as a regular file, similar to traditional file systems, and directly maps the device zone write constraints onto the file. Files representing sequential-write-required zones of the device have to be written sequentially. The figure above illustrates the zonefs principle.
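To make the sequential write constraint concrete, here is a small in-memory model of a sequential zone file. It is only a toy sketch, not zonefs code: the class name `SequentialZoneFile`, its `pwrite` method, and the error messages are invented for illustration. The model assumes that the file size plays the role of the zone write pointer and that the zone size caps the file size.

```python
class SequentialZoneFile:
    """Toy in-memory model of a sequential zone file (hypothetical, not zonefs code)."""

    def __init__(self, zone_size: int):
        self.zone_size = zone_size  # maximum file size, fixed by the zone
        self.data = bytearray()     # len(self.data) stands in for the write pointer

    @property
    def size(self) -> int:
        return len(self.data)

    def pwrite(self, buf: bytes, offset: int) -> int:
        # A write must start exactly at the current end of the file.
        if offset != self.size:
            raise OSError(f"offset {offset} is not at write pointer {self.size}")
        # A write cannot extend past the end of the zone.
        if offset + len(buf) > self.zone_size:
            raise OSError("write exceeds zone size")
        self.data += buf
        return len(buf)


zone = SequentialZoneFile(zone_size=8)
zone.pwrite(b"abcd", 0)        # accepted: starts at the write pointer
try:
    zone.pwrite(b"zz", 0)      # rejected: would overwrite data already written
except OSError as err:
    print("rejected:", err)
zone.pwrite(b"efgh", 4)        # accepted: the zone is now full
```

The point of the model is that the application, not the file system, is responsible for issuing writes at the right offset.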

Who Needs Zoned Block Devices Anyways?

The motivation for Zoned Block Device technology is driven by at-scale data centers that require utmost efficiency, particularly companies providing cloud services and cloud-based applications. As our world quickly marches to zettabyte scale, we’ll be seeing more businesses needing to adopt this technology in order to keep up with the growth of data and to efficiently manage system capacity and performance.

Yes, zoned storage is not just a capacity play.

On the one hand, SMR hard drives are being adopted by cloud service companies to achieve a better cost/capacity footprint at scale. That’s because SMR drives can store considerably more data than conventional drives. What this translates to at exabyte scale is significant savings in hardware, power, cooling, and management complexity. Dropbox is a great example of the long-term payoff of SMR technology. They were able to add hundreds of petabytes of new capacity at significant cost savings, even while upgrading CPUs and increasing network bandwidth.

On the other side of the equation we have NVMe™ Zoned Namespace (ZNS) SSDs. Although these operate on a concept similar to that of SMR drives, they offer other benefits, due to the nature of Flash technology.

ZNS SSDs are lean and mean. By moving most of the drive management from the flash translation layer (FTL) to the software stack, you gain extra space (in some cases as much as 28%), lower costs (as these drives require less DRAM), and higher performance that can take advantage of the inherently lower latency and higher bandwidth of Flash.

What to Expect with Zonefs

As with direct zoned block device access, zonefs requires the application to write data to files sequentially. Unlike a regular file system, zonefs does not handle this basic constraint of zoned block devices transparently. The strength of zonefs lies in the simpler disk space management it brings and in the protection it provides against data corruption due to application errors.

- Simpler interface: With the exception of the sequential write constraint, many aspects of zoned block device management are integrated into zonefs. This allows for simpler application code that does not need, for instance, to implement a complex I/O error recovery procedure (e.g. zone condition and write pointer position checks on read or write error).

- Explicit zone open/close management, which can provide performance improvements on some devices, can also be automated by linking these operations to the file open/close operations performed by the application.

- Finally, the new zone append write command defined by ZNS can be transparently supported using standard write() or asynchronous I/O system calls. While the initial version of zonefs does not yet implement zone append and zone open/close management, a solid I/O error detection and handling implementation is already in place.

- Isolation: Representing each zone of a zoned block device as a file creates isolation between zones, since an I/O operation on a file can only be issued to the zone it represents. Application errors leading to invalid write offsets, for instance, cannot corrupt other zones. This isolation also applies to destructive operations such as zone reset, which is linked to a regular file truncate() system call.
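As a rough sketch of how the file interface replaces zone management ioctls, the two helpers below use only standard file system calls through Python's `os` module. The function names `write_pointer` and `reset_zone` are hypothetical. The sketch also assumes, following the kernel documentation for zonefs rather than anything stated above, that the size of a sequential zone file reflects the position of the zone write pointer.

```python
import os


def write_pointer(zone_file: str) -> int:
    """Return the file size; on zonefs this is assumed to track the zone write pointer."""
    return os.stat(zone_file).st_size


def reset_zone(zone_file: str) -> None:
    """Truncate the file to zero; on zonefs this is the operation linked to a zone reset."""
    os.truncate(zone_file, 0)
```

On a mounted zonefs volume, `zone_file` would name a single zone file, for example `/mnt/zonefs/seq/0`, where `/mnt/zonefs` is a hypothetical mount point. Because each call takes one file path, a mistaken reset can only affect the zone behind that file.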

Overall, the zonefs file interface simplifies the application changes needed to support zoned block devices. Typical examples are key-value stores that rely on a log-structured merge tree (LSM-tree) data structure, such as the well-known RocksDB and LevelDB. Since LSM-tree key-value store tables are always written sequentially, Zonefs files can be used to replace a regular file system without needing extensive application modifications. Performance is also improved, as the overhead of zonefs is much lower than that of a fully POSIX-compliant file system such as ext4 or XFS.
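The following sketch illustrates why an LSM-tree style table fits this model: the table is serialized in sorted key order and written in a single sequential pass starting at the current end of the file. The record layout (two little-endian 32-bit lengths followed by key and value) and the function names are invented for illustration and are not the RocksDB or LevelDB table format. The sketch uses buffered writes so it runs on any regular file; on a real zonefs mount, sequential zone files accept only direct I/O writes with suitably aligned buffers, which is omitted here.

```python
import os
import struct


def encode_table(entries: dict[bytes, bytes]) -> bytes:
    """Serialize key-value pairs in sorted key order as length-prefixed records."""
    out = bytearray()
    for key in sorted(entries):
        value = entries[key]
        out += struct.pack("<II", len(key), len(value)) + key + value
    return bytes(out)


def append_table(path: str, entries: dict[bytes, bytes]) -> int:
    """Write one table at the current end of the file and return its start offset."""
    fd = os.open(path, os.O_WRONLY)
    try:
        offset = os.fstat(fd).st_size      # append position is the current file size
        payload = encode_table(entries)
        written = 0
        while written < len(payload):      # loop to handle short writes
            written += os.pwrite(fd, payload[written:], offset + written)
        return offset
    finally:
        os.close(fd)
```

Each call to `append_table` only ever writes at the end of the file, so the write pattern never violates the sequential write constraint.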

Making Zoned Storage Adoption Easier

Overall, the adoption of SMR HDDs and ZNS SSDs allows for leaner operating efficiency at every level. As data growth continues to explode, this way of simplifying the stack will be critical to managing data at scale. We’re working hard to make zoned storage easier to adopt and use. Zonefs is one step forward in making that happen.

Learn More

Zoned Storage:

http://zonedstorage.io

Linux kernel documentation file for zonefs:

https://www.kernel.org/doc/Documentation/filesystems/zonefs.txt

Western Digital’s Zoned Storage Initiative:

https://www.westerndigital.com/company/innovations/zoned-storage