“Do you know the way to San Jose?” Burt Bacharach and Dionne Warwick did, and so did over 10,000 tech industry players and customers who packed into the San Jose Convention Center for NVIDIA GTC, a global AI conference that ran this week. This was the first in-person GTC since before the pandemic, so there was a lot of pent-up excitement, bolstered by the explosion of artificial intelligence over the last year. Lenovo made headlines at the show by launching the most complete portfolio of generative AI systems built on NVIDIA GPUs, networking, and software in the industry. With new announcements across the portfolio, new AI solutions and services, and breakout sessions that challenged the industry to truly deliver “Smarter AI for All,” Lenovo continued its AI momentum. That momentum began at TechWorld last October, when Lenovo Chairman and CEO Yuanqing Yang announced Hybrid AI during a keynote with NVIDIA founder and CEO Jensen Huang.

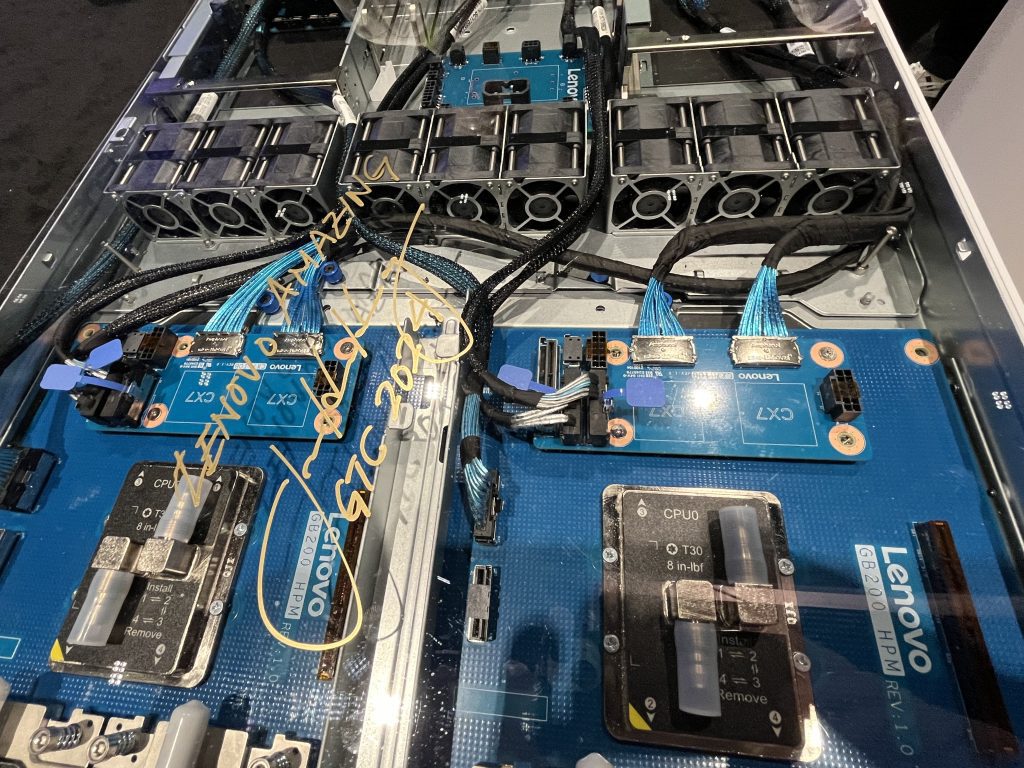

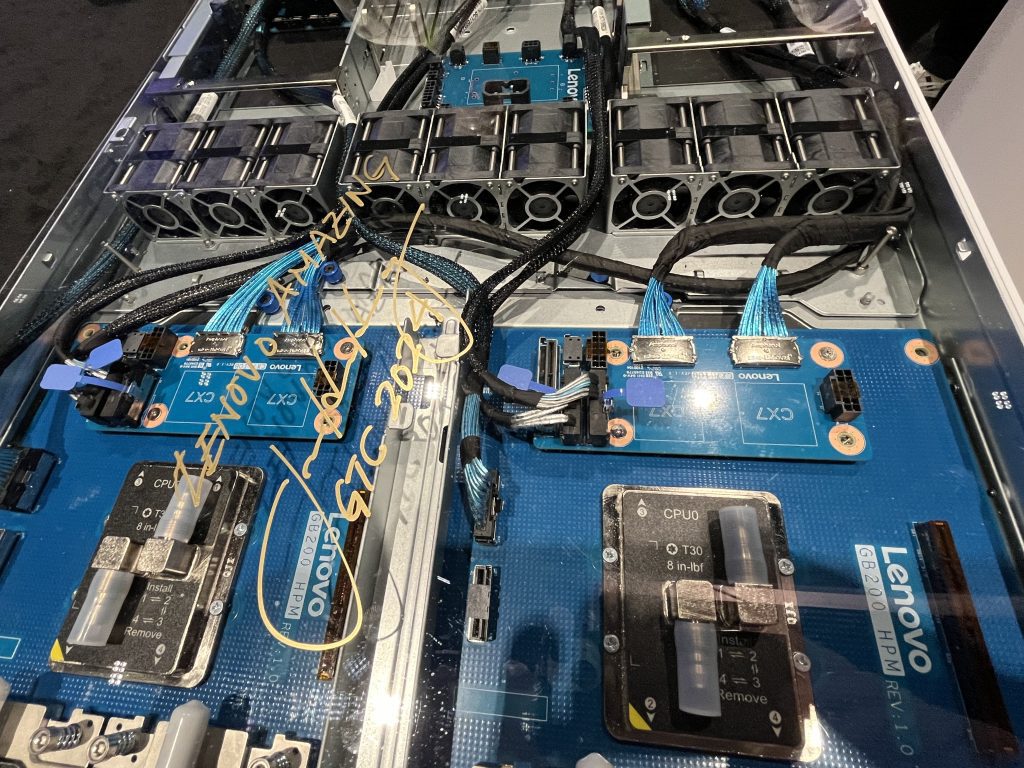

At GTC, Lenovo got things off to a rousing start with the launch of not one but two full-stack AI systems designed to deliver large language model (LLM) and machine learning capabilities to customers ranging from cloud providers to enterprises across industries to high-performance computing (HPC) labs. Built to deliver massive computational power, the Lenovo ThinkSystem SR680a V3 and ThinkSystem SR780a each support eight NVIDIA H100 and H200 Tensor Core GPUs, as well as the newly announced NVIDIA B100 and B200 Tensor Core GPUs, connected by the high-speed NVIDIA NVLink interconnect fabric.

- The ThinkSystem SR780a features Lenovo Neptune™ liquid-cooling technology, with direct water cooling of the CPUs, GPUs, and the NVLink switch in a dense 5U package. Because liquid cooling removes heat much more efficiently than air cooling, the ThinkSystem SR780a can run at peak performance for sustained periods without hitting thermal limits.

- The ThinkSystem SR680a is an 8U, air-cooled system featuring Intel CPUs, designed to deliver maximum acceleration for complex generative AI workloads such as model development and machine learning. Engineered to fit into an industry-standard 19-inch rack, the ThinkSystem SR680a integrates quickly and easily into existing data center infrastructure.

Keep watching this space, because we will have more announcements in the first half of this year that will give Lenovo customers the broadest choice of accelerated systems in the industry.

For those who provide AI capabilities to others via the cloud, Lenovo made several key announcements. Lenovo is partnering with NVIDIA to build accelerated models faster using the NVIDIA MGX modular reference architecture, supported by NVIDIA’s full software stack, including NVIDIA AI Enterprise. With these MGX-based designs, cloud service providers receive customized models sooner and can deliver accelerated computing for AI workloads economically and at scale. These systems include:

- New Lenovo HG630N – MGX 1U – an open standard server with Lenovo Neptune™ direct liquid cooling to reduce power consumption while supporting the highest-performing GPUs.

- New Lenovo HG650N – MGX 2U – a highly modular, GPU-optimized system that is air-cooled, supports industry standard racks, and enables NVIDIA GH200 Grace Hopper Superchip deployments.

- New Lenovo HG660X V3 – MGX 4U – a system that supports up to 8 double-width 600W NVIDIA GPUs in an air-cooled environment. Ideal for workloads using NVIDIA OVX systems and AI, the MGX 4U was designed by Lenovo in collaboration with NVIDIA.

- New Lenovo HR650N – MGX 2U – a high-performing ARM CPU server with multiple cores and flexibility for storage and front I/O, leveraging the NVIDIA Grace CPU Superchip, with DPU support.

Our AI innovation showcased at GTC wasn’t limited to hardware and software. In collaboration with NVIDIA, Lenovo Professional Services announced solutions and offerings designed both to help customers understand what they’ll need for AI within their enterprise and to fast-track the development and deployment of their vision, shortening time to implementation. These include:

- AI Discover – Helps customers uncover the “art of the possible” for AI. By conducting interactive workshops and assessments, looking across the entire ecosystem, and mapping out the AI strategy, Lenovo builds the blueprint for AI success.

- Generative AI services with NVIDIA – Lenovo unveiled professional services focused on generative AI and retrieval-augmented generation, or RAG. Providing thoughtful guidance on the journey from development to deployment, Lenovo professionals leverage experience to deliver powerful data insights for solutions that capture competitive advantage with generative AI. Lenovo offers full-stack solutions to support the complete product lifecycle, plus services to implement, adopt, and scale generative AI solutions based on reference architectures developed in collaboration with NVIDIA.

- Enhanced Professional Services for AI – Helps customers accelerate their AI transformation by providing business advisors, data scientists, and AI-optimized infrastructure-as-a-service for seamless use of sustainable AI.

Lastly, Lenovo conducted a breakout session with the Scott-Morgan Foundation showcasing our work with them to develop an avatar for Erin, a young woman diagnosed with ALS. The avatar will help Erin communicate, in her own voice, when ALS makes communication challenging. Responsible AI is about using this powerful technology to truly deliver “Smarter AI for All” and solve our greatest challenges.